We’re pleased to announce the release of Gravwell 2.2.1! For a point release, it’s got some very cool new features; read on to learn what we’ve added.

Gravwell Community Edition for Docker

We’ve had several requests for Docker images, and with 2.2.1 we’re happy to announce official Gravwell Community Edition images for the core installer and the Netflow, CollectD, and Simple Relay ingesters!

Getting started is easy; first, launch the core Gravwell container:

docker run -d --rm --name gravCE gravwell/community:latest

Now use `docker inspect` to figure out what IP Docker has assigned:

docker inspect -f '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' gravCE
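If you plan to reuse the address in later commands, it can help to wrap the lookup in a small shell function. This is only a convenience sketch around the same `docker inspect` call; the function name `container_ip` is ours and is not part of Gravwell or Docker.

```shell
# Print the IP address Docker assigned to a named container.
# $1: container name (for example, gravCE).
# container_ip is a hypothetical helper name used for illustration.
container_ip() {
    docker inspect -f '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' "$1"
}
```

With that defined, `GRAVWELL_IP=$(container_ip gravCE)` stores the address in a variable you can use wherever the IP is needed.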

In our case, it was 172.17.0.2. Point your web browser at that IP and upload your Community Edition license. You should now have a ready-to-go Gravwell instance with the Simple Relay ingester pre-installed and listening on TCP ports 7777 (newline-delimited logs) and 601 (syslog) as well as UDP port 514 (syslog).
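To check that the Simple Relay listener is accepting data, you can push a single line at the newline-delimited port. The sketch below assumes a netcat binary named `nc` is installed on the sending machine and that it honors `-w` as a timeout in seconds; `send_test_line` is a name we made up for this post.

```shell
# Send one newline-terminated line to the Simple Relay listener on TCP 7777.
# $1: IP of the Gravwell container, $2: message text.
# Assumes `nc` is available; send_test_line is a hypothetical helper.
send_test_line() {
    printf '%s\n' "$2" | nc -w 2 "$1" 7777
}
```

For example, `send_test_line 172.17.0.2 "hello from netcat"` should produce one entry. Which tag that entry lands under depends on how Simple Relay is configured in the image, so a wide search is the easiest way to find it.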

If you want to add a Netflow ingester, simply use the IP we found before and start a Netflow container:

docker run -d --rm --name gravNetflow -e GRAVWELL_CLEARTEXT_TARGETS=172.17.0.2 -e GRAVWELL_INGEST_SECRET=IngestSecrets gravwell/netflow_capture:latest

The Netflow ingester listens for Netflow records on port 2055 by default.

To keep this blog post short we’ll stop there, but watch for future posts where we’ll go more in-depth on running Gravwell CE in Docker, especially how to run things more securely.

Security Note

This method of launching a Docker container IS NOT SECURE. Anyone with access to your Docker host can see the secrets passed as environment variables, and we are using a set of TLS certificates that everyone in the world has access to. Please, DO NOT RUN THIS IN PRODUCTION without regenerating the TLS certificates and properly configuring unique secrets for your indexer, webserver, and ingesters.

We recommend using Docker’s “secrets” functionality (see https://docs.docker.com/engine/swarm/secrets/#read-more-about-docker-secret-commands) to store the Ingest Secret in a Docker secrets file. Then, rather than passing GRAVWELL_INGEST_SECRET=IngestSecrets, you can set something like GRAVWELL_INGEST_AUTH_FILE=/run/secrets/ingest_secret to avoid leaking the secret.
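As a rough sketch of what that could look like: Docker secrets are a swarm feature, so this assumes the engine is already in swarm mode (for example, after `docker swarm init`) and that the ingester runs as a service rather than through `docker run`. The function names and the secret name `ingest_secret` are our own choices for illustration, and you should confirm that the service can actually reach your indexer's address on your network setup.

```shell
# Create a Docker secret named ingest_secret from a file on disk.
# $1: path to a file containing only the ingest secret.
# Requires swarm mode; both function names here are hypothetical helpers.
create_ingest_secret() {
    docker secret create ingest_secret "$1"
}

# Run the Netflow ingester as a swarm service. Docker mounts the secret
# at /run/secrets/ingest_secret inside the container.
# $1: IP address of the Gravwell indexer.
start_netflow_service() {
    docker service create --name gravNetflow \
        --secret ingest_secret \
        -e GRAVWELL_CLEARTEXT_TARGETS="$1" \
        -e GRAVWELL_INGEST_AUTH_FILE=/run/secrets/ingest_secret \
        gravwell/netflow_capture:latest
}
```

Reading the secret from a file, rather than typing it as a command-line argument, also keeps it out of your shell history.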

Tag Wildcards

One of the most exciting additions in Gravwell 2.2.1 is the introduction of wildcards for tags in search queries. You can now run a search over all data in the system:

tag=* grep foo

Or find any entries tagged ‘testA’, ‘testB’, or ‘testC’, but excluding ‘testD’ or ‘testZ’:

tag=test[ABC] grep foo

We support the standard globbing wildcards as described in http://tldp.org/LDP/GNU-Linux-Tools-Summary/html/x11655.htm, so go ahead and experiment!
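If you want a feel for which tag names a pattern will pick up before running a search, the shell's own `case` statement uses the same style of globbing. The snippet below is only an approximation run in your shell, not Gravwell's matcher, so edge cases may differ; `tag_matches` is a hypothetical helper name.

```shell
# Return success if a tag name matches a glob pattern.
# $1: glob pattern (quote it so the shell does not expand it early),
# $2: tag name. Uses the shell's glob rules, not Gravwell's code.
tag_matches() {
    case "$2" in
        $1) return 0 ;;
        *)  return 1 ;;
    esac
}

# Try the pattern from the example above against a few tag names.
for tag in testA testB testC testD testZ; do
    if tag_matches 'test[ABC]' "$tag"; then
        echo "$tag: match"
    else
        echo "$tag: no match"
    fi
done
```

Swapping in `'*'` or `'pc*'` as the first argument lets you try other patterns the same way.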

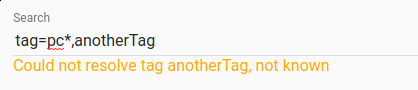

Gravwell will now also check if the tags specified match any actual extant tags as you type them. In the image below, we see that the “pc*” pattern is fine (in this case, it matched “pcap”), but “anotherTag” is not a tag known to the system.

FileFollow Ingester Enhancements

The FileFollow ingester has had two major enhancements in 2.2.1.

First, setting the ‘Recursive’ flag on any Follower element in the config file will make the ingester follow any files matching the pattern in the base directory or its subdirectories.

Second, the ‘Ignore-Line-Prefix’ option allows individual Followers to ignore lines beginning with a specified string; this is useful for ignoring comments in certain log formats.

Here's an example Follower config showing both new options:

[Follower "myFollower"]
	Base-Directory="/tmp/test/"
	File-Filter="{*}"
	Ignore-Line-Prefix="#"
	Recursive=true
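To try the two options out, you could build a small directory tree with a nested file and a few comment lines, then compare what shows up in Gravwell against what you expect. The helpers below are a sketch with made-up names and file contents; `expected_lines` only approximates the documented behavior (every line under the base directory that does not start with `#`) and is not the ingester's actual logic.

```shell
# Create a small fixture: one file in the base directory, one in a
# subdirectory, each containing a comment line. $1: base directory.
# Both helper names are hypothetical.
make_follow_fixture() {
    mkdir -p "$1/nested"
    printf '# header comment\nfirst entry\n' > "$1/top.log"
    printf 'second entry\n# another comment\n' > "$1/nested/deep.log"
}

# Print the lines we would expect to be ingested: everything in every
# file under the base directory except lines beginning with '#'.
expected_lines() {
    find "$1" -type f -exec cat {} + | grep -v '^#'
}
```

Running `make_follow_fixture /tmp/test` followed by `expected_lines /tmp/test` gives you a list to check against your search results.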

Conclusion

With our new Docker images and the addition of tag wildcards in searches, it’s even easier to get Gravwell up and start exploring your data. You can find more information in our documentation (https://documentation.gravwell.io), and don’t hesitate to email support@gravwell.io with any questions!