Gravwell Resources

Learn about everything from customer success stories and product info to viewpoints from the core team.

Blog

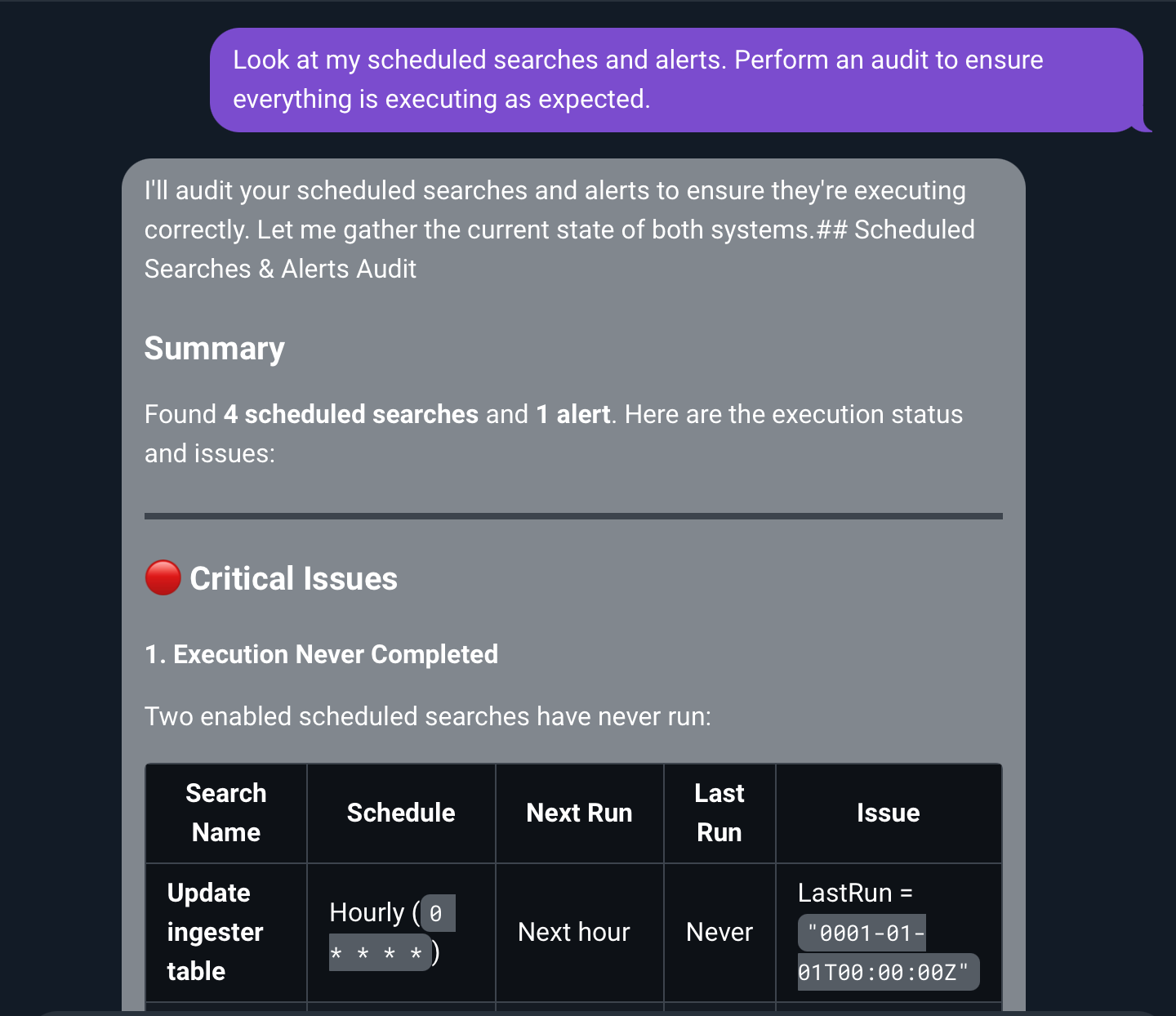

Today, we’re excited to announce a new and improved Logbot, Gravwell’s AI-powered security data assistant, as part of Gravwell v5.9. The release adds deeper platform integration, faster natural-language investigation workflows, instant playbook and automation generation, and bidirectional AI integrations through MCP. These upgrades bring AI-driven assistance directly into daily workflows, helping practitioners get to answers faster and do more with complex security data.

From Raw Data to Answers: Meet the New Logbot

The Shift from SIEM to Cybersecurity Data Platform

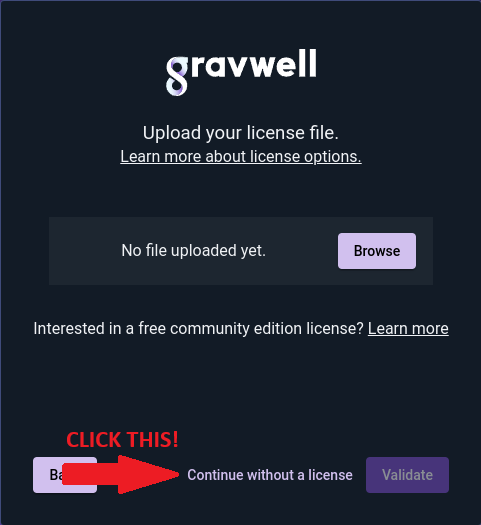

Gravwell 5.6.0 New License Tiers

Gravwell 5.4.0 New Feature: Updated Eval Module

Gravwell 5.4.0 released - New alerting features

Using lookup to invert matches

Accelerated Filtering with Eval

DOCUMENTATION

All Gravwell documentation is open to everyone.

If you’re just starting out with Gravwell, we recommend reading the Quick Start first, then moving on to the Search pipeline documentation to learn more.