Gravwell Resources

Learn about everything from customer success stories and product info to viewpoints from the core team.

Blog

Gravwell 5.7.0 introduces Logbot, a Gravwell assistant that helps you understand logs. Log analysis can feel like deciphering a foreign language: tedious, time-consuming, and frustrating. While we don't get to choose how any given vendor formats their logs, we don't have to go it alone. Logbot is here to reduce the time you spend reading technical documentation so you can get right into analysis.


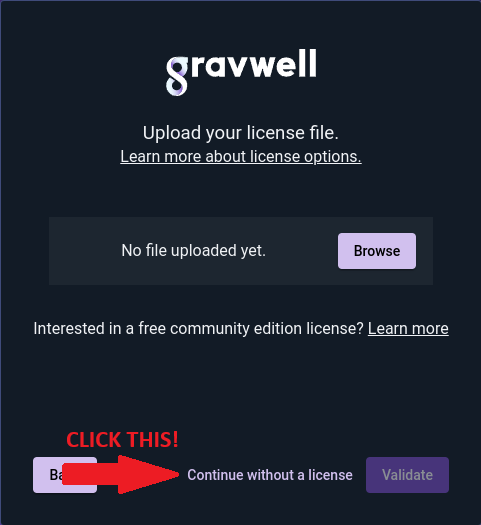

Gravwell 5.6.0 New License Tiers

The basics of Gravwell API Access Tokens

Announcing Gravwell 5.0.0 Orion

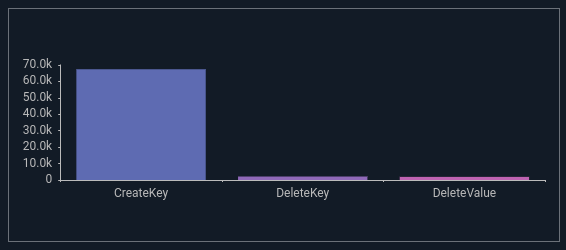

What's in a Sysmon Event - Windows Registry EventIDs 12, 13, 14

Announcing Gravwell 4.2.0 - Voyager Release

Announcing the Gravwell Sysmon Kit

Documentation

All Gravwell documentation is open to everyone.

If you’re just starting out with Gravwell, we recommend reading the Quick Start first, then moving on to the Search pipeline documentation to learn more.